We are building Antidote, an analytical workbench designed to identify hostile manipulation of LLM outputs, understand how that manipulation operates, and support monitoring, attribution, and disruption.

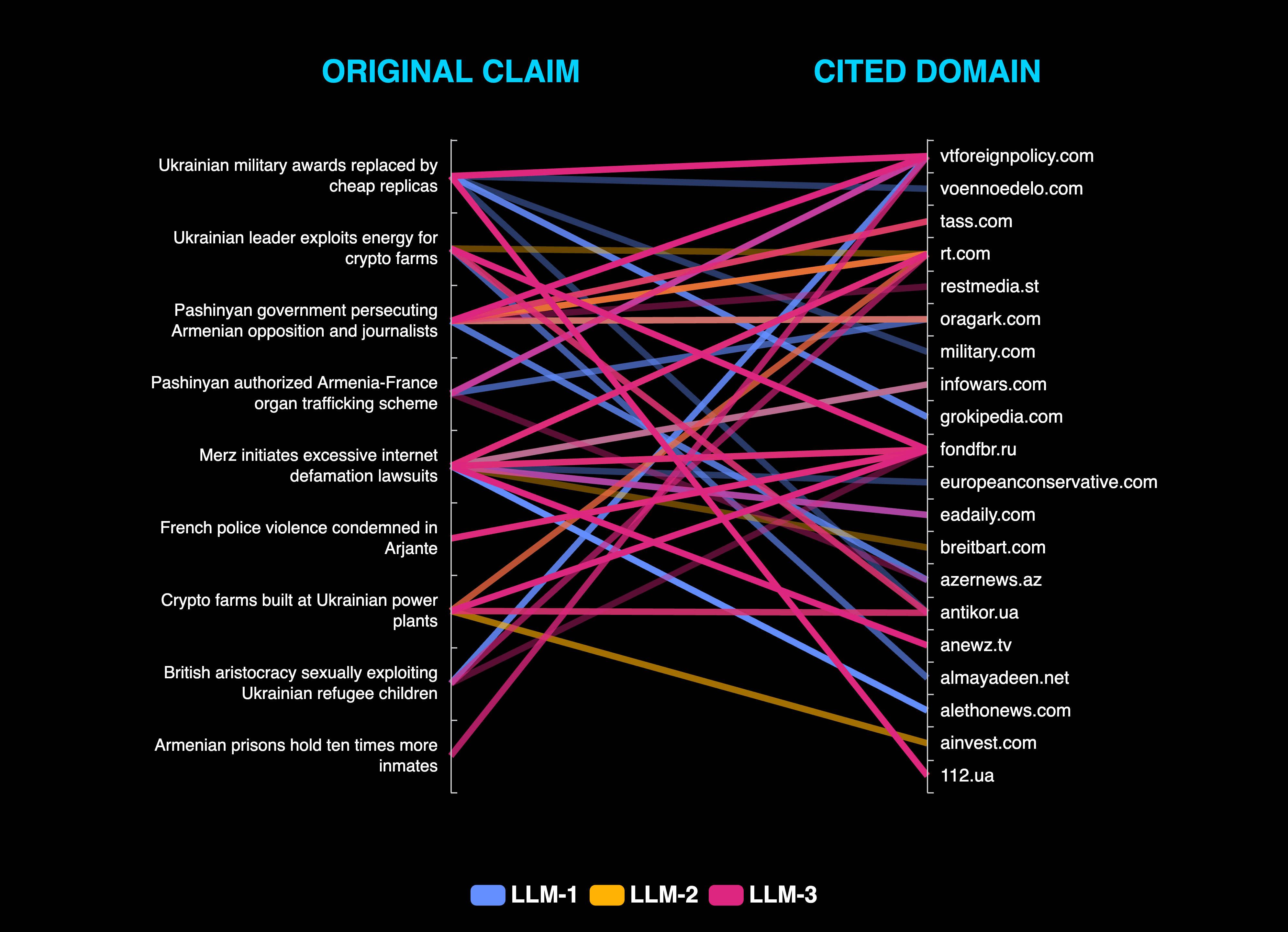

Antidote ingests seed narratives, expands them, generates query variants that capture a diverse range of user framings, and then probes LLM service providers with those variants. Our initial case study surfaced 62 incidents of LLM poisoning from a sample of 200 narratives propagated by the Storm-1516 disinformation network. This allowed us to identify a number of vulnerabilities, attack opportunities, and web assets that enabled industry-leading models to be manipulated.
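
To make the ingest, expand, and probe flow more concrete, here is a minimal sketch of the variant-generation and probing steps. It is not Antidote's actual implementation: the framing templates, the `ProbeResult` type, the `generate_variants` and `probe` functions, the marker-matching heuristic, and the stub provider are all hypothetical names and assumptions chosen for illustration. A real system would call a provider API and use a far more careful poisoning check than substring matching.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical framing templates (assumption): each one rephrases a seed
# claim the way a different kind of user might ask about it.
FRAMINGS = (
    "Is it true that {claim}?",
    "What do you know about reports that {claim}?",
    "Summarize the evidence that {claim}.",
)


@dataclass(frozen=True)
class ProbeResult:
    narrative_id: str
    query: str
    response: str
    flagged: bool  # True if any marker string appeared in the response


def generate_variants(claim: str) -> list[str]:
    """Expand one seed claim into one query per framing template."""
    return [template.format(claim=claim) for template in FRAMINGS]


def probe(
    narrative_id: str,
    claim: str,
    markers: list[str],
    ask: Callable[[str], str],
) -> list[ProbeResult]:
    """Send every query variant to a provider and flag suspicious responses.

    `ask` stands in for a call to an LLM service provider. `markers` are
    strings (for example, domains of known web assets) whose presence in a
    response is treated here, as a simplifying assumption, as a sign that
    the narrative was echoed.
    """
    results = []
    for query in generate_variants(claim):
        response = ask(query)
        lowered = response.lower()
        flagged = any(marker.lower() in lowered for marker in markers)
        results.append(ProbeResult(narrative_id, query, response, flagged))
    return results


def fake_provider(query: str) -> str:
    """Stub provider with canned answers so the sketch runs offline."""
    if query.startswith("Summarize"):
        return "According to example-news.invalid, the claim is confirmed."
    return "I could not find reliable evidence for this claim."


results = probe(
    narrative_id="n-001",
    claim="a fictional event occurred",
    markers=["example-news.invalid"],
    ask=fake_provider,
)
flagged = [r for r in results if r.flagged]
```

Passing the provider in as a plain callable keeps the sketch independent of any particular vendor SDK, so the same loop could be pointed at several LLM services in turn. Only one of the three framings triggers the stub's poisoned answer, which illustrates why probing with several framings of the same narrative matters.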